Comparing Open-Source Text-to-Speech Models

Human robot interaction only feels natural when the robot can speak back. Whether it's a voice assistant, an embodied agent, or an AI tutor, the ability to respond in natural language unlocks a fundamentally different user experience; one that's intuitive, accessible, and doesn't require users to learn new interfaces. But "natural" is doing a lot of work in that sentence. Users notice robotic prosody, unnatural pauses, and voices that don't match the context. Getting speech synthesis right isn't a nice-to-have; it's the difference between a tool people tolerate and one they actually want to use.

This post dissects four open-source TTS systems that represent distinct points in the design space: Piper (VITS-based, blazing fast, runs anywhere), NeuTTS Air (LLM + neural codec, excellent zero-shot cloning), VibeVoice (σ-VAE + diffusion, built for long-form multi-speaker content), and Chatterbox (Llama backbone + HiFi-GAN, with paralinguistic control). Rather than declaring a winner, we'll map out when each architecture shines.

Along the way, we'll build intuition for the shared building blocks (phonemizers, mel spectrograms, vocoders, tokenization strategies) and see how different design choices cascade through the entire pipeline. By the end, you'll have a mental framework for evaluating not just these four models, but the next wave of TTS systems as they emerge.

Shared Concepts Across TTS Models

Phonemes & espeak-ng

Phonemes are the smallest units of sound that distinguish one word from another (e.g., "cat" has three: /k/, /æ/, /t/). espeak-ng is an open-source, rule-based tool that converts written text into phoneme sequences. This is useful because phonemes represent how words are pronounced, bypassing tricky spelling inconsistencies (like "through" vs "threw"). Used by NeuTTS and Piper.

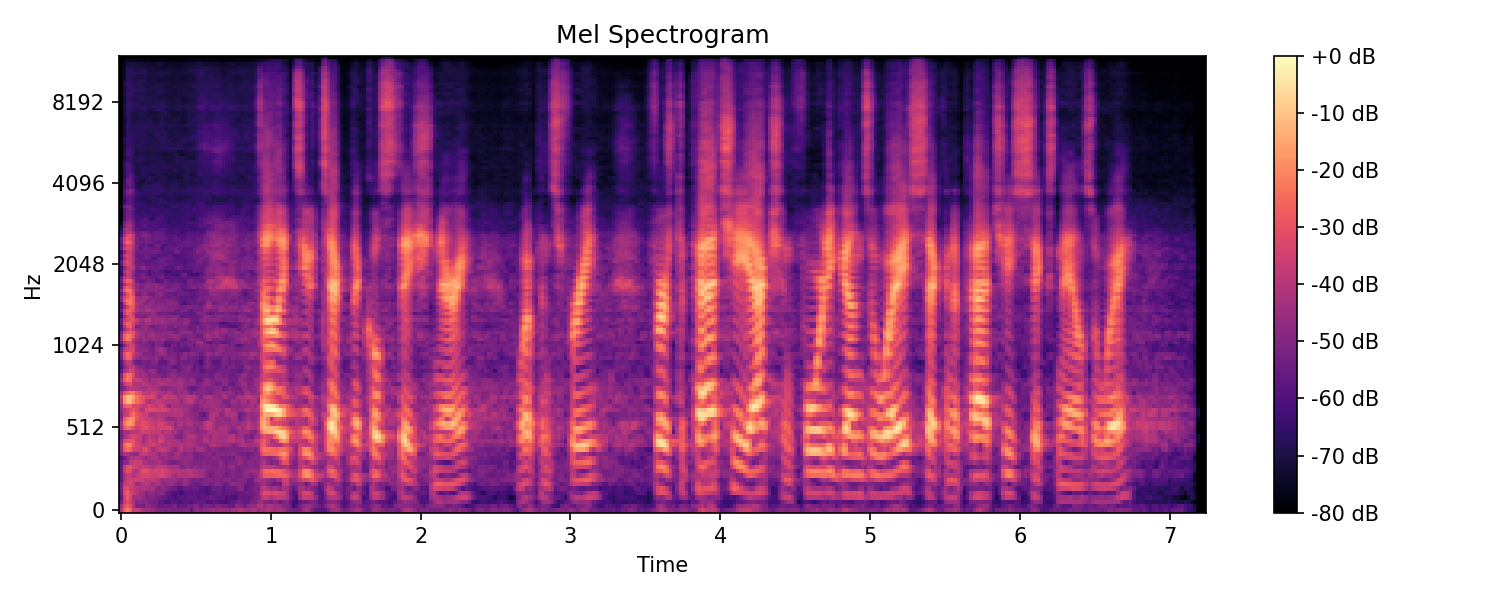

Mel Spectrograms

A mel spectrogram is a 2D representation of audio showing frequency content over time. It's created by:

- Windowing — Breaking the audio into overlapping time chunks (frames), typically 20-50ms each

- FFT — Applying a Fast Fourier Transform to each frame to extract frequency components

- Mel scaling — Mapping frequencies to the mel scale, which matches human hearing perception (we're more sensitive to differences at low frequencies)

The result shows what sounds are present but discards phase information (the exact wave shape). Many TTS systems generate mel spectrograms as an intermediate step, then use a vocoder to convert them to audio. Used by Piper and Chatterbox.

Neural Audio Codecs

Neural codecs compress raw audio into compact token sequences using learned encoder-decoder networks. NeuTTS uses NeuCodec (dual encoders for semantic + acoustic features, FSQ quantization). VibeVoice uses a σ-VAE variant that achieves 3200× compression at just 7.5 tokens/second. These enable LLMs to "speak audio" by predicting tokens instead of raw samples.

Vocoders

Vocoders convert mel spectrograms into audio waveforms. HiFi-GAN (used by Piper and Chatterbox) uses transposed convolutions to upsample from ~80 frames/sec to 22kHz+ audio, and was trained adversarially to produce natural-sounding output. Neural codec decoders (NeuTTS, VibeVoice) serve a similar role.

LLM Backbones

Modern TTS increasingly uses language model architectures. NeuTTS fine-tunes Qwen 0.5B, VibeVoice uses Qwen2.5 (1.5B/7B), and Chatterbox uses Llama (500M). These LLMs are adapted to predict audio tokens/features instead of text tokens, leveraging their ability to model long-range dependencies. Most of these models generate audio sequentially, predicting one frame/token at a time, in an autoregressive fasion. This enables coherent long-form output but limits generation speed and maximum length (bounded by context window). Piper is the exception, using a non-autoregressive VITS architecture.

Model Deep Dives

NeuTTS Air

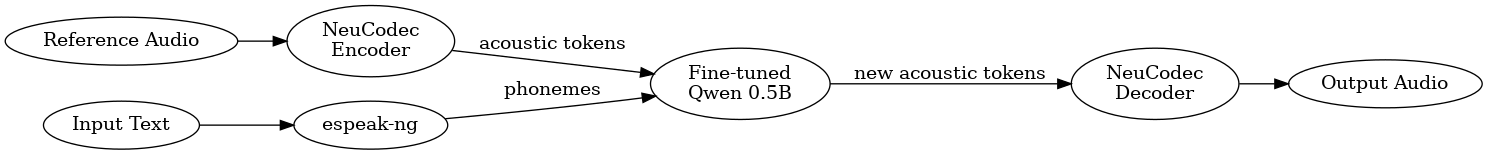

NeuTTS Air is a text-to-speech model developed by Neuphonic that brings voice cloning capabilities to edge devices. At its core is a fine-tuned Qwen 0.5B language model, making it one of the first TTS systems to leverage a general-purpose LLM for speech synthesis.

How It Works

The model operates in two stages. First, input text is converted into phonemes using espeak-ng. These phonemes, along with acoustic tokens extracted from a reference audio clip, are fed into the fine-tuned Qwen model. The LLM has been trained to predict new acoustic code tokens that represent the desired speech. Importantly, Qwen never "hears" audio directly. Instead, the reference audio is first encoded into tokens by NeuCodec, so the entire pipeline operates in a shared token space.

In the second stage, these predicted acoustic tokens are decoded back into audio by NeuCodec's decoder, producing a 24kHz waveform.

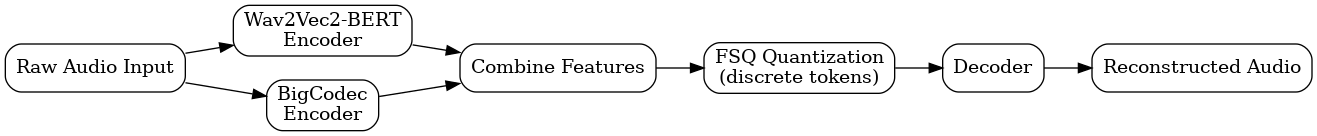

NeuCodec: The Neural Audio Codec

NeuCodec uses a dual-encoder design where two separate encoders process the input audio in parallel:

- Wav2Vec2-BERT captures semantic and linguistic features, essentially understanding what is being said

- BigCodec captures acoustic features like timbre, pitch, and voice characteristics

The outputs from both encoders are combined and quantized using Finite Scalar Quantization (FSQ). Unlike traditional vector quantization which learns a codebook of embeddings, FSQ simply rounds continuous values to a fixed set of discrete levels. This avoids training instabilities like codebook collapse while achieving 50 tokens per second at just 0.8 kbps.

Voice Cloning

To clone a voice, you need a reference audio clip (3 to 15 seconds of clean speech) and a transcript of what is being said. The model uses the transcript to learn which sounds correspond to which parts of the audio, allowing it to apply those voice characteristics to new text.

Limitations

- Context window of ~2048 tokens limits output to roughly 30 seconds

- No prosody control (no SSML or markup support)

- espeak-ng is hardcoded to American English phonemes

- Longer content requires chunking and stitching

Piper

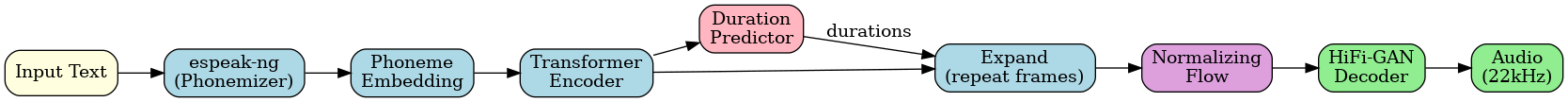

Piper is a fast, local neural text-to-speech engine from the Open Home Foundation. Built on the VITS architecture (Variational Inference with adversarial learning for end-to-end Text-to-Speech), it's designed to run efficiently on CPUs and edge devices.

How It Works

Unlike the LLM-based models, Piper is non-autoregressive, it generates the entire utterance in one forward pass rather than predicting tokens sequentially.

The pipeline has two main stages. First, input text is converted to phonemes using espeak-ng, then passed through a transformer encoder that produces a rich representation of each phoneme. A duration predictor determines how long each phoneme should last, and the encoder output is expanded (repeated) to match the audio's time resolution.

In the second stage, a normalizing flow adds natural variation to the expanded representation, and a HiFi-GAN decoder upsamples it directly to a 22kHz audio waveform.

VITS Architecture

VITS combines several components into one end-to-end model:

- Transformer Encoder processes phoneme embeddings with bidirectional attention

- Duration Predictor estimates frame counts per phoneme, trained using Monotonic Alignment Search (MAS)

- Normalizing Flow learns invertible transformations that capture speaking style variation

- HiFi-GAN Decoder uses transposed convolutions with Multi-Receptive Field Fusion to generate raw audio

During training, a posterior encoder and MAS work together to find phoneme-to-audio alignments. At inference, only the text path is used.

Voices

Each Piper voice is a separately trained ONNX model file. Voices capture timbre, accent, pitch range, and speaking style from their training data. Switching voices means loading a different model—there's no zero-shot cloning capability.

Limitations

- No voice cloning (must train or download pre-made voices)

- Limited prosody control (punctuation influences pacing)

- espeak-ng phonemization can struggle with heteronyms

- Quality depends entirely on training data for each voice

VibeVoice

VibeVoice is a text-to-speech model from Microsoft designed for expressive, long-form, multi-speaker conversational audio like podcasts and audiobooks. It can generate up to 90 minutes of speech with up to 4 distinct speakers.

How It Works

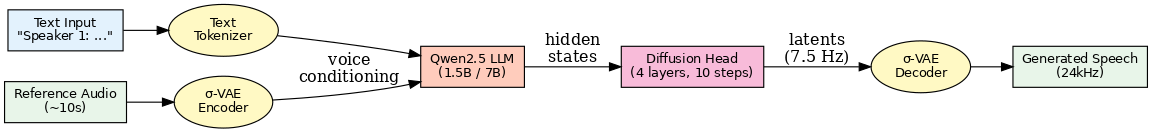

VibeVoice combines three components: ultra-low frame rate speech tokenizers, an LLM backbone, and a diffusion head.

Input text (with speaker tags like "Speaker 1: ...") is tokenized alongside voice conditioning from reference audio. The LLM (Qwen2.5, fine-tuned end-to-end) processes this context and outputs hidden states for each token position. A lightweight diffusion head then denoises these hidden states into continuous VAE latents, which are decoded into 24kHz audio.

The key insight is operating at just 7.5 tokens per second—each token represents ~133ms of audio. This means 90 minutes of speech requires only ~40,000 tokens, fitting within modern LLM context windows.

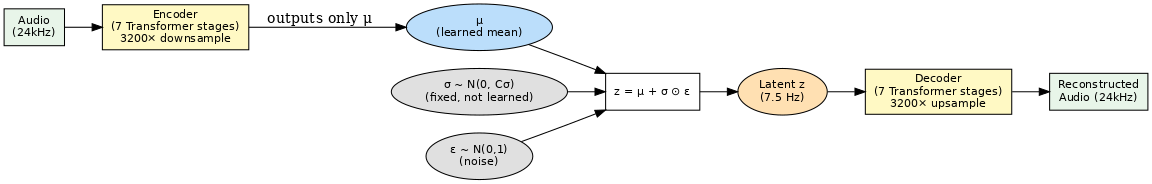

σ-VAE: The Acoustic Tokenizer

VibeVoice uses a σ-VAE variant (from LatentLM) that achieves 3200× compression. Unlike standard VAEs where the encoder learns both mean (μ) and variance (σ), the σ-VAE encoder only learns μ. The variance is sampled from a fixed prior distribution N(0, C_σ), preventing the variance collapse that plagues standard VAEs in autoregressive settings.

The architecture uses 7 stages of Transformer blocks with 1D depthwise causal convolutions (~340M parameters each for encoder and decoder).

Next-Token Diffusion

Instead of predicting discrete tokens, the LLM produces continuous embeddings that a small diffusion head (~123M params, just 4 layers) refines. At inference, it uses only 10 denoising steps with DPM-Solver++ and Classifier-Free Guidance (scale 1.3). This avoids the quality loss from discretization while remaining efficient.

Model Variants

| Model | Duration | Speakers | Voice Cloning |

|---|---|---|---|

| 0.5B Streaming | Real-time | 1 | Pre-computed embeddings only |

| 1.5B | Up to 90 min | Up to 4 | Yes (from ~10s reference audio) |

| 7B | Up to 45 min | Up to 4 | Yes (higher quality) |

Voice Cloning

For the 1.5B and 7B models, reference audio is passed through the VAE encoder to extract voice characteristics on the fly, no pre-training on specific speakers required. The 0.5B streaming model uses pre-computed embeddings for faster inference but is limited to predefined voices.

Limitations

- Maximum duration bounded by LLM context window (not architecture)

- Autoregressive generation is slower than parallel methods like Piper

- Requires ~10 seconds of reference audio for cloning

- No text-based voice description (must provide audio sample)

- Speaker tags required in input text for multi-speaker output

Chatterbox

Chatterbox is a family of open-source TTS models from Resemble AI, built on a Llama backbone and trained on over 500,000 hours of audio. It offers zero-shot voice cloning and paralinguistic control (like [laugh] and [cough] tags).

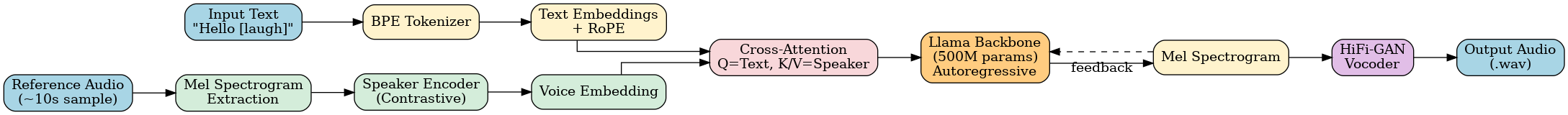

How It Works

Text is tokenized via BPE and converted to embeddings with RoPE positional encoding. Reference audio (~10 seconds) is converted to a mel spectrogram, then passed through a speaker encoder (trained with contrastive loss) to extract a voice embedding.

These two streams merge via cross-attention: text embeddings form the Query, while the speaker embedding is projected into separate Key and Value representations. The Llama backbone (500M params) then autoregressively generates mel spectrogram frames, each frame conditions on text, speaker, and all previously generated frames.

Finally, a HiFi-GAN vocoder upsamples the mel spectrogram to a 22kHz audio waveform.

Model Variants

| Variant | Parameters | Key Features |

|---|---|---|

| Chatterbox (original) | 500M | English, CFG & exaggeration tuning |

| Chatterbox-Turbo | 350M | Distilled 1-step decoder, paralinguistic tags |

| Chatterbox-Multilingual | 500M | 23+ languages, zero-shot cloning |

The Turbo variant uses a distilled decoder that generates mel spectrograms in one step instead of 10, significantly improving speed.

Voice Cloning

Provide ~10 seconds of reference audio and Chatterbox extracts speaker characteristics via the speaker encoder. No transcript of the reference is needed (unlike NeuTTS).

Limitations

- Context window limits output to ~50 seconds (depends on mel frame rate)

- Longer content requires chunking and crossfade stitching

- Autoregressive generation is slower than parallel methods like Piper

- Built-in PerTh watermarking (may or may not be desirable)

Model Comparison

| Feature | Piper | NeuTTS Air | VibeVoice 0.5B Streaming | VibeVoice 1.5B | Chatterbox |

|---|---|---|---|---|---|

| Architecture | VITS (non-AR) | Qwen 0.5B + NeuCodec | Qwen2.5 + σ-VAE + Diffusion | Qwen2.5 + σ-VAE + Diffusion | Llama 500M + HiFi-GAN |

| Parameters | ~20M | 500M | 500M | 1.5B | 500M |

| Voice Cloning | ❌ (pretrained voices) | ✅ (3-15s + transcript) | ❌ (precomputed embeddings) | ✅ (10s audio) | ✅ (10s audio) |

| Multi-Speaker | ❌ | ❌ | ❌ | ✅ (up to 4) | ❌ |

| Max Duration | Unlimited | ~30s | Real-time streaming | Up to 90 min | ~50s |

| Prosody Control | ❌ | ❌ | ❌ | ❌ | ✅ ([laugh], [cough]) |

| Generation | Parallel (fast) | Autoregressive | Autoregressive | Autoregressive | Autoregressive |

| Output Sample Rate | 22 kHz | 24 kHz | 24 kHz | 24 kHz | 22 kHz |

| Phonemizer | espeak-ng | espeak-ng | BPE tokenizer | BPE tokenizer | BPE tokenizer |

Listen & Compare

The same text was synthesized by each model:

Pilot text: "Tally 2 technical, stationary. Weapons free. First Apache, action 40, guns away. Second Apache, 6 nails away."

| Model | Voice | Audio |

|---|---|---|

| Piper | (Joe-medium) | |

| VibeVoice Streaming | (Mike) | |

| VibeVoice | (Tom Cruise cloned) | |

| NeuTTS Air | (Tom Cruise cloned) | |

| Chatterbox | (Tom Cruise cloned) |

Reference audio used to clone Tom Cruise's voice:

Conclusion

These four TTS systems represent distinct trade-offs in the design space:

- Piper delivers unmatched speed through parallel generation, but sacrifices naturalness and voice cloning

- NeuTTS Air achieves impressive zero-shot cloning with minimal reference audio (as little as 3 seconds), leveraging an LLM backbone in a compact package

- VibeVoice excels at long-form, multi-speaker content (up to 90 minutes), though its streaming variant trades quality for real-time performance

- Chatterbox balances speed, quality, and expressiveness with paralinguistic control that the others lack

In my testing, Piper and VibeVoice Streaming produced noticeably robotic output—fine for utility applications, but not for content where naturalness matters. Chatterbox hit the sweet spot: lightning-fast generation, solid voice cloning, and the ability to inject [laugh] or [cough] for more human-like delivery. NeuTTS Air is a close second, particularly impressive given its small footprint and excellent cloning quality from just a few seconds of reference audio.

The right choice depends on your constraints: edge deployment without cloning → Piper. Long-form podcasts → VibeVoice 1.5B/7B. Quick, expressive cloning with personality → Chatterbox or NeuTTS.

References

- VibeVoice — Microsoft's long-form multi-speaker TTS

- Chatterbox — Resemble AI's expressive TTS with paralinguistic control

- NeuTTS Air — Neuphonic's edge-friendly voice cloning model

- Piper — Open Home Foundation's fast VITS-based TTS

Voice cloning reference audio:

Tom Cruise acceptance speech (21–36 seconds)